Fixture Firmware Rollout Guide for Live Rigs

A production-safe approach to planning, testing, and rolling out fixture firmware updates without introducing show-day control regressions.

Purpose and operational constraints

This guide addresses the operational realities of deploying new fixture firmware in live production environments. It frames every decision around reducing risk to front-of-house (FOH) operations during rehearsals, doors, and performances. The goal is a repeatable process that integrates with FOH workflows, backup consoles, and node or gateway behavior, so that firmware changes do not introduce regressions that interrupt a show.

Defining scope and risk assessment

Start by cataloging every fixture type, its control protocol, and where it hangs on the rig. Build a matrix that maps fixture model, current firmware, and control path, including DMX universes, sACN streams, RDM UIDs, and upstream gateways or nodes. Identify the impact domains: the FOH operator console, the backup console, local node/gateway firmware, and house control systems. For each change, record the worst-case visible failure mode during doors and performance, such as loss of intensity, frozen pan/tilt, incorrect color maps, or unexpected channel remapping.
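One way to keep the matrix queryable is to hold each row as a small record. The sketch below is illustrative only: the `FixtureEntry` fields, the `by_gateway` and `needs_update` helpers, and the sample rows (model names, UIDs, node names) are all hypothetical. Grouping by gateway answers "what does one node failure take down?", and comparing against target versions lists the fixtures a change actually touches.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class FixtureEntry:
    """One row of the fixture/control-path matrix (field names are illustrative)."""
    model: str
    firmware: str      # currently installed firmware version
    universe: int      # DMX/sACN universe carrying this fixture
    rdm_uid: str       # RDM UID as printed by your discovery tool
    gateway: str       # upstream node or gateway feeding that universe
    worst_case: str    # worst-case visible failure during doors/performance


def by_gateway(entries):
    """Group fixtures by gateway: everything a single node failure could take down."""
    groups = defaultdict(list)
    for entry in entries:
        groups[entry.gateway].append(entry)
    return dict(groups)


def needs_update(entries, target_versions):
    """Return entries whose installed firmware differs from the target for their model."""
    return [
        e for e in entries
        if e.model in target_versions and e.firmware != target_versions[e.model]
    ]


# Hypothetical sample rows for illustration.
rig = [
    FixtureEntry("SpotA", "1.2.0", 1, "4D41:00000001", "node-SL", "frozen pan/tilt"),
    FixtureEntry("WashB", "3.0.1", 2, "4D41:00000002", "node-SL", "incorrect color map"),
    FixtureEntry("SpotA", "1.3.0", 3, "4D41:00000003", "node-SR", "frozen pan/tilt"),
]
for gw, fixtures in sorted(by_gateway(rig).items()):
    print(gw, [f.worst_case for f in fixtures])
```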

Scheduling windows and escalation paths

Choose deployment windows that respect creative and operational constraints. Typical safe windows are dedicated technical rehearsals, off hours between shows, and preproduction days. Schedule a primary rollout window and at least two escalation windows in case of unexpected regressions. Define an explicit go/no-go criterion for each window; at minimum, the FOH programmer and the stage manager must both sign off before the production rollout proceeds in that window. Document an escalation tree with contact details for manufacturer support, the lighting director, and the automation engineer, and include a plan for activating the backup console and fallback nodes if needed.
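The scheduling rules above are simple enough to check mechanically before anyone arrives at the venue. This is a minimal sketch, assuming windows and sign-offs are tracked as plain records; the `Window` type and the helper names are hypothetical, and the required roles are taken from this guide rather than any standard.

```python
from dataclasses import dataclass, field

# Roles taken from this guide; add any others your production requires.
REQUIRED_SIGNOFFS = frozenset({"FOH programmer", "stage manager"})


@dataclass
class Window:
    name: str
    kind: str                                   # "primary" or "escalation"
    signoffs: set = field(default_factory=set)  # roles that have signed off


def validate_schedule(windows):
    """Check for at least one primary window plus at least two escalation windows."""
    problems = []
    if not any(w.kind == "primary" for w in windows):
        problems.append("no primary rollout window")
    if sum(w.kind == "escalation" for w in windows) < 2:
        problems.append("fewer than two escalation windows")
    return problems


def go_no_go(window):
    """Return (go, missing_signoffs); any missing sign-off means no-go."""
    missing = sorted(REQUIRED_SIGNOFFS - window.signoffs)
    return (not missing, missing)
```

Keeping the decision as data makes the go/no-go outcome easy to log alongside the deployment record.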

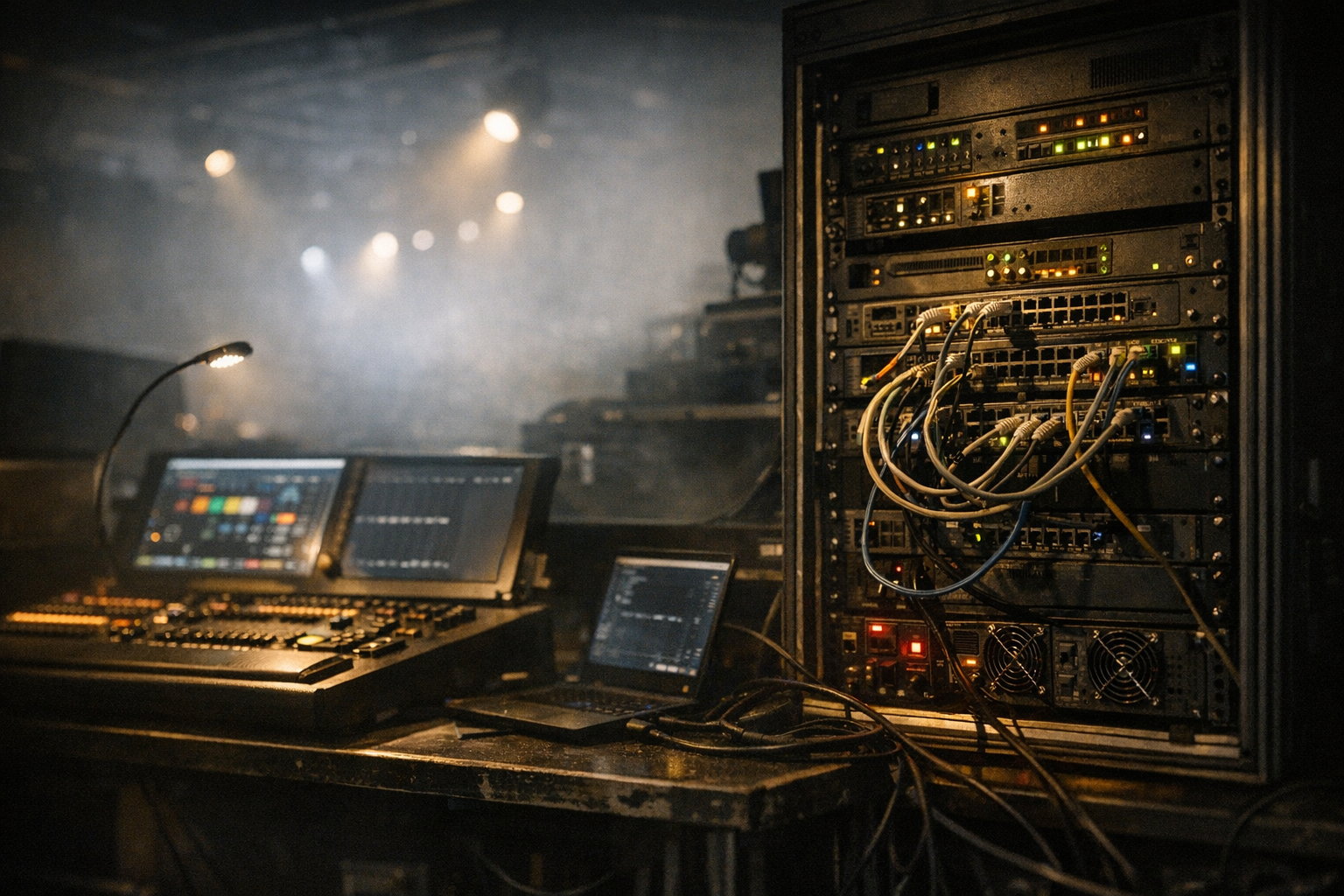

Test rig and lab emulation

Build a representative test rig that mirrors the live rig at the systems level, not necessarily at full scale. The lab should include a FOH console or a functional emulator, a backup console, a gateway or node matching the live units, and at least one sample of each fixture family. Simulate the network topology, including multicast behavior, universe routing, and any Art-Net/sACN bridges. Reproduce the doors period and power cycles so you can observe node/gateway behavior under real-world conditions. Use this bench to validate firmware behavior under network load and to verify any changes in RDM discovery or device responses.
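Before trusting lab results, it helps to confirm that the bench actually covers the live rig. The sketch below assumes both inventories are kept as sets; the dictionary keys, role names, and helper names are hypothetical.

```python
# Component roles the lab must contain, per this guide (role names are illustrative).
REQUIRED_ROLES = frozenset({"foh_console", "backup_console", "gateway"})


def lab_gaps(live_rig, lab):
    """Return what the lab is missing compared with the live rig.

    live_rig needs "gateway_models" and "families" (sets of strings);
    lab needs those plus "roles" (set of component roles present).
    """
    return {
        "roles": sorted(REQUIRED_ROLES - lab["roles"]),
        "gateway_models": sorted(live_rig["gateway_models"] - lab["gateway_models"]),
        "families": sorted(live_rig["families"] - lab["families"]),
    }


def lab_ready(live_rig, lab):
    """The lab counts as representative only when every gap list is empty."""
    return not any(lab_gaps(live_rig, lab).values())
```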

Version control and staging environments

Maintain a firmware repository with clear semantic versioning and release notes. Tag builds with the hardware revisions they target and with any known limitations. Use staged repositories: development, rehearsal, and production. Only promote a firmware build to rehearsal after it passes the lab tests, and only promote it to production after rehearsal validation. Record a checksum for every image, and keep a local copy of the previous firmware so you can restore devices in the field without internet access to manufacturer servers.
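The checksum and promotion rules can be enforced with a few lines of standard-library Python. This is a sketch, not a repository tool: the stage and gate names mirror this guide, and `verify_image` and `promote` are hypothetical helpers. SHA-256 is assumed as the checksum; use whatever algorithm your repository records.

```python
import hashlib

# Promotion order and the gate each stage must pass before moving on (from this guide).
STAGES = ("development", "rehearsal", "production")
GATES = {"development": "lab tests", "rehearsal": "rehearsal validation"}


def sha256_file(path, chunk_size=65536):
    """Stream a firmware image from disk and return its SHA-256 hex digest."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_image(path, expected_hex):
    """True if the image on disk matches the checksum recorded in the repository."""
    return sha256_file(path) == expected_hex.strip().lower()


def promote(stage, gate_passed):
    """Return the next stage, refusing to skip a gate or promote past production."""
    if stage not in GATES:
        raise ValueError(f"cannot promote from {stage!r}")
    if not gate_passed:
        raise RuntimeError(f"{GATES[stage]} not passed; staying in {stage}")
    return STAGES[STAGES.index(stage) + 1]
```

Running `verify_image` against the local rollback copy before the window opens confirms that the fallback image is intact, not just present.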

Rehearsal integration with FOH and backup consoles

Treat rehearsal as a full systems test that includes the FOH operator and the backup console operator. During rehearsal, exercise full cue stacks, intensity fades, macros, and position moves that will occur during the show. Validate cue timing and ensure that fixture calibration, color balance, and frame timing remain consistent. Test behavior when control is transferred to the backup console, and verify that node/gateway rejoin times and DMX continuity meet production tolerances. Simulate doors behavior and hot re-patching so that load-in or last-minute changes do not create unexpected states.
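DMX continuity during a console transfer can be judged from frame arrival times captured on the output universe. The sketch below assumes you already have those timestamps in milliseconds from some capture tool (how you capture them is outside its scope); the tolerance is whatever your production agrees on, not a value from any standard.

```python
def max_gap_ms(frame_times_ms):
    """Largest interval between consecutive frames seen on the output universe."""
    ts = sorted(frame_times_ms)
    return max((b - a for a, b in zip(ts, ts[1:])), default=0)


def gap_at_ms(frame_times_ms, event_ms):
    """Interval spanning an event: last frame before it to first frame at or after it."""
    before = [t for t in frame_times_ms if t < event_ms]
    after = [t for t in frame_times_ms if t >= event_ms]
    if not before or not after:
        return None  # output never resumed (or never started): treat as a failure
    return min(after) - max(before)


def transfer_ok(frame_times_ms, transfer_ms, tolerance_ms):
    """Pass if output resumed after the switchover and no gap exceeded the tolerance."""
    return (gap_at_ms(frame_times_ms, transfer_ms) is not None
            and max_gap_ms(frame_times_ms) <= tolerance_ms)
```

Reporting `gap_at_ms` for each rehearsal transfer gives a concrete number to compare against production tolerances.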

Rollout strategies: incremental, canary, and group-based

Adopt a phased rollout strategy to constrain risk. Depending on rig size and criticality, choose one of the following: a canary approach that updates a small, noncritical group of fixtures first; a zone-based approach that updates one area at a time, such as the house-left or house-right truss; or a role-based approach that first upgrades fixtures that are static or non-key during doors. For each strategy, define acceptance tests that must pass before expanding to the next group. Use group-based addressing on the console to isolate updated fixtures during rehearsal runs and to remove them quickly if a regression appears.
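A canary-then-zones plan and its stop-on-failure expansion can be sketched as follows. The fixture dictionaries, field names, and the `apply_update`/`acceptance_test` callables are hypothetical stand-ins for your actual update tool and acceptance checks; swapping `group_key` to a role field gives the role-based variant.

```python
from collections import defaultdict


def plan_waves(fixtures, canary_size, group_key="zone"):
    """Canary wave of noncritical fixtures first, then one wave per group.

    fixtures: list of dicts with "id", "critical" (bool) and the group_key field.
    """
    noncritical = sorted(f["id"] for f in fixtures if not f["critical"])
    canary = noncritical[:canary_size]
    waves = [canary] if canary else []
    groups = defaultdict(list)
    for fx in sorted(fixtures, key=lambda x: x["id"]):
        if fx["id"] not in canary:
            groups[fx[group_key]].append(fx["id"])
    waves.extend(groups[key] for key in sorted(groups))
    return waves


def run_rollout(waves, apply_update, acceptance_test):
    """Update wave by wave; stop expanding at the first wave that fails acceptance.

    Returns (ids that passed, index of the failed wave or None).
    """
    passed = []
    for index, wave in enumerate(waves):
        apply_update(wave)
        if not acceptance_test(wave):
            return passed, index  # isolate this wave on the console and roll it back
        passed.extend(wave)
    return passed, None
```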

Monitoring, diagnostics, and rollback procedures

Implement real-time monitoring during deployment. Monitor node/gateway logs, RDM discovery responses, and sACN/DMX continuity. Use console diagnostic tools to watch channel values and timing jitter. Define automated health checks that validate channel ranges and pan/tilt motion profiles after firmware is applied. Prepare rollback procedures that work without network dependencies: carry a local copy of the prior firmware, maintain physical access to nodes for local recovery, and script batch restores for common gateway models. Schedule the rollback acceptance window early enough that FOH can verify functional recovery before doors.
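The two automated health checks named above reduce to simple comparisons once the data is captured. This sketch assumes a full 512-slot frame captured from the universe and a list of sampled pan or tilt readbacks; how those are obtained depends on your tools and fixtures, and the step and tolerance limits are hypothetical parameters you would set per fixture.

```python
DMX_SLOTS = 512


def channel_range_failures(frame, expected_ranges):
    """Compare a captured 512-slot frame against per-channel (lo, hi) limits.

    expected_ranges maps 1-based DMX channel -> (lo, hi), inclusive.
    Returns [(channel, value)] for every channel outside its range.
    """
    if len(frame) != DMX_SLOTS:
        raise ValueError("expected a full 512-slot DMX frame")
    failures = []
    for channel, (lo, hi) in sorted(expected_ranges.items()):
        value = frame[channel - 1]
        if not lo <= value <= hi:
            failures.append((channel, value))
    return failures


def motion_profile_ok(positions, target, max_step, tolerance):
    """Check a sampled pan or tilt move: no jumps above max_step, ends near target.

    positions are readbacks in arbitrary units; an empty sample list fails.
    """
    steps = [abs(b - a) for a, b in zip(positions, positions[1:])]
    return (bool(positions)
            and all(s <= max_step for s in steps)
            and abs(positions[-1] - target) <= tolerance)
```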

Post-rollout validation, documentation, and lessons learned

After a successful rollout, run a validation pass against the acceptance criteria and produce a concise technical summary for operations. Include the firmware versions applied, fixture serial ranges, observed anomalies, and the exact recovery steps taken if any regressions were encountered. Update rig diagrams, asset management records, and the repository metadata. Capture lessons learned for the next deployment window, focusing on any node/gateway latencies, RDM discovery quirks, or control console behaviors that affected the process.
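The summary for operations is easier to produce consistently if it is generated from the same records used during the rollout. Below is a minimal sketch, assuming each applied update is logged as a `(model, firmware_version, serial)` tuple; the output layout is hypothetical, and serial ranges use lexical min/max, which only makes sense if serials share a fixed-width format.

```python
import json


def rollout_summary(applied, anomalies, recovery_steps):
    """Build an operations summary from (model, firmware_version, serial) tuples."""
    models = {}
    for model, version, serial in applied:
        entry = models.setdefault(model, {"versions": set(), "serials": []})
        entry["versions"].add(version)
        entry["serials"].append(serial)
    firmware = {
        model: {
            "versions": sorted(entry["versions"]),
            "count": len(entry["serials"]),
            # Lexical range: assumes fixed-width serial strings.
            "serial_range": [min(entry["serials"]), max(entry["serials"])],
        }
        for model, entry in models.items()
    }
    return {
        "firmware": firmware,
        "anomalies": list(anomalies),
        "recovery_steps": list(recovery_steps),
    }


def to_json(summary):
    """Stable, human-readable JSON for the deployment record."""
    return json.dumps(summary, indent=2, sort_keys=True)
```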

FAQ

What if a fixture stops responding during doors?

If a fixture fails during doors, prioritize restoring show-safe control. Transfer control to the backup console if it preserves channels and cues. If the issue is isolated to a firmware-updated group, remove that group from the active showfile or patch and use the console to force safe DMX values. If a gateway or node appears to be the failure point, power-cycle that device if it can be done without affecting critical cues; otherwise route around it using spare universes or a prebuilt emergency mapping.
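Forcing safe DMX values for an isolated group comes down to overwriting that group's channels in the outgoing frame. The sketch below shows only that logic, assuming a 512-byte frame and known start addresses; the `SAFE_SPOT` profile (intensity at 0, coarse pan/tilt centered) is a hypothetical example, since safe values depend on each fixture's channel layout.

```python
DMX_SLOTS = 512


def force_safe(frame, start_addresses, safe_profile):
    """Return a copy of a DMX frame with safe values written into one fixture group.

    frame:           512 bytes currently being output
    start_addresses: 1-based start address of each fixture in the affected group
    safe_profile:    {footprint offset: value}; offset 0 is the start address itself
    """
    out = bytearray(frame)
    if len(out) != DMX_SLOTS:
        raise ValueError("expected a full 512-slot DMX frame")
    for start in start_addresses:
        for offset, value in safe_profile.items():
            channel = start + offset
            if not 1 <= channel <= DMX_SLOTS:
                raise ValueError(f"channel {channel} outside universe")
            if not 0 <= value <= 255:
                raise ValueError(f"value {value} is not a DMX level")
            out[channel - 1] = value
    return bytes(out)


# Hypothetical profile: offset 0 = intensity, offsets 1 and 2 = coarse pan/tilt.
SAFE_SPOT = {0: 0, 1: 128, 2: 128}
```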

How do I validate node and gateway behavior before a broad rollout?

Run endurance tests in the lab that include multicast storms, sACN failover, and power cycling. Verify that each node re-acquires its network address and rejoins the network within the time window FOH considers acceptable. Stress-test RDM discovery across many devices and watch for discovery delays. Record rejoin latencies and add them to the deployment acceptance criteria.
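Turning recorded rejoin latencies into an acceptance criterion can be as simple as judging the worst case against the agreed limit. This sketch assumes each power-cycle trial is logged as a pair of timestamps in seconds; the helper names and the limit are hypothetical.

```python
import statistics


def rejoin_latencies(trials):
    """trials: list of (power_restored_at, rejoined_at) timestamps in seconds."""
    return [rejoined - restored for restored, rejoined in trials]


def rejoin_acceptance(latencies, limit_s):
    """Summarize lab trials and judge them against the FOH rejoin limit.

    The worst observed case must pass, not just the typical one.
    """
    if not latencies:
        raise ValueError("no trials recorded")
    worst = max(latencies)
    return {
        "trials": len(latencies),
        "median_s": statistics.median(latencies),
        "worst_s": worst,
        "pass": worst <= limit_s,
    }
```

Judging on the worst case rather than the median is a deliberate choice: a node that usually rejoins quickly but occasionally stalls is exactly the failure that surfaces during a show.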

Can I update firmware from FOH during load-in?

Avoid firmware updates from FOH during load-in unless absolutely unavoidable. Load-in is a high-risk period with many moving parts. If you must update, limit changes to nonessential fixtures and have an immediate rollback method and a team member dedicated to watching the network and console for regressions.

How do I ensure backup console continuity after firmware changes?

Test the backup console during rehearsal with the same firmware profile and network topology. Confirm that fixture addressing and channel mapping are identical, and verify that cue playback and executor behavior match FOH. Maintain synchronized showfiles and a documented failover procedure detailing which consoles take priority and how to perform a controlled switchover.
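Confirming identical addressing and channel mapping is easier when both patches are exported into a common form and compared. The sketch assumes each patch maps a fixture ID to `(universe, start_address, mode)`; the export step and the helper names are hypothetical. Including the mode matters because a firmware change can alter a fixture's channel layout.

```python
def patch_diff(foh_patch, backup_patch):
    """Compare FOH and backup patches, each {fixture_id: (universe, start_address, mode)}."""
    foh_ids, backup_ids = set(foh_patch), set(backup_patch)
    return {
        "only_foh": sorted(foh_ids - backup_ids),
        "only_backup": sorted(backup_ids - foh_ids),
        "mismatched": sorted(
            fid for fid in foh_ids & backup_ids if foh_patch[fid] != backup_patch[fid]
        ),
    }


def patches_identical(foh_patch, backup_patch):
    """True only when both consoles patch the same fixtures identically."""
    return not any(patch_diff(foh_patch, backup_patch).values())
```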

What documentation should I deliver to operations after rollout?

Provide a deployment log containing firmware versions, device serials, risks encountered, and recovery steps. Include updated network diagrams, a rollback image repository, and a short support playbook for FOH and stage crew that lists immediate actions for common failures observed during the rollout.